Month: September 2015

Structuralist Methods in a Post-Structuralist Humanities

The topic of this conference (going on now!) at Utrecht University raises an issue similar to the one raised in my article at LSE’s Impact Blog: DH’ists have been brilliant at mining data but not always so brilliant at pooling data to address the traditional questions and theories that interest humanists. Here’s the conference description (it focuses specifically on DH and history):

Across Europe, there has been much focus on digitizing historical collections and on developing digital tools to take advantage of those collections. What has been lacking, however, is a discussion of how the research results provided by such tools should be used as a part of historical research projects. Although many developers have solicited input from researchers, discussion between historians has been thus far limited.

The workshop seeks to explore how results of digital research should be used in historical research and to address questions about the validity of digitally mined evidence and its interpretation.

And here’s what I said in my Impact Blog article, using as an example my own personal hero’s research in literary geography:

[Digital humanists] certainly re-purpose and evoke one another’s methods, but to date, I have not seen many papers citing, for example, Moretti’s actual maps to generate an argument not about methods but about what the maps might mean. Just because Moretti generated these geographical data does not mean he has sole ownership over their implications or their usefulness in other contexts.

I realize now that the problem is still one of method—or, more precisely, of method incompatibility. And the conference statement above gets to the heart of it.

Mining results with quantitative techniques is ultimately just data gathering; the next and more important step is to build theories and answer questions with that data. The problem is, in the humanities, that moving from data gathering to theory building forces the researcher to move between two seemingly incommensurable ways of working. Quantitative data mining is based on strict structuralist principles, requiring categorization and sometimes inflexible ontologies; humanistic theories about history or language, on the other hand, are almost always post-structuralist in their orientation. Even if we’re not talking Foucault or Derrida, the tendency in the humanities is to build theories that reject empirical readings of the world that rely on strict categorization. The 21st century humanistic move par excellence is to uncover the influence of “socially constructed” categories on one’s worldview (or one’s experimental results).

On Twitter, Melvin Wevers brings up the possibility of a “post-structuralist corpus linguistics.” James Baker and I replied that this might be a contradiction in terms. To my knowledge, there is no corpus project in existence that could be said to enact post-structuralist principles in any meaningful way. Such a project would require a complete overhaul of corpus technology from the ground up.

So where does that leave the digital humanities when it comes to the sorts of questions that got most of us interested in the humanities in the first place? Is DH condemned forever to gather interesting data without ever building (or challenging) theories from that data? Is it too much of an unnatural vivisection to insert structural, quantitative methods into a post-structuralist humanities?

James Baker throws an historical light on the question. When I said that post-structuralism and corpus linguistics are fundamentally incommensurable, he replied with the following point:

@SethLargo @melvinwevers And it makes sense, right. As post-structuralism was ~ a reaction to quant social science methods, cliometrics etc.

— James Baker (@j_w_baker) September 14, 2015

And he suggested that in his own work, he tries to follow this historical development:

@melvinwevers I enjoy being consciously positivist then subjecting my positivism to sustained critique. Helps me get somewhere #beyondmining

— James Baker (@j_w_baker) September 14, 2015

Structuralism and post-structuralism exist (or should exist) in dialectical tension. The latter is a real historical response to the former. It makes sense, then, to enact this tension in DH research. Start out as a positivist, end as a critical theorist, then go back around in a recursive process. This is probably what anyone working with DH methods does already. I think Baker’s point is that my “problem” posed above (structuralist methods in a post-structuralist humanities) isn’t so much a problem as a tension we need to be comfortable living with.

Not all humanistic questions or theories can be meaningfully tackled with structuralist methods, but some can. Perhaps a first step toward enacting the structuralist/post-structuralist dialectical tension in research is to discuss principles regarding which topics are or are not “fair game” for DH methods. Another step is going to be for skeptical peer reviewers not to balk at structuralist methods by subtly trying to remove them with calls for more “nuance.” Searching out the nuances of an argument—refining it—is the job of multiple researchers across years of coordinated effort. Knee-jerk post-structuralist critiques (or requests for an author to put them in her article) are unhelpful when a researcher has consciously chosen to utilize structuralist methods.

Some questions about centrality measurements in text networks

This .gif alternates between a text network calculated for betweenness centrality (smaller nodes overall) and one calculated for degree centrality (larger nodes). It’s normal to discover that most nodes in a network possess higher degree than betweenness centrality. However, in the context of human language, what precisely is signified by this variation? And is it significant?

Another way of posing the question is to ask what exactly one discovers about a string of words by applying centrality measurements to each word as though it were a node in a network, with edges between words to the right or left of it. The networks in the .gif visualize variation between two centrality measurements, but there are dozens of others that might have been employed. Which centrality measurements—if any—are best suited for textual analysis? When centrality measurements require the setting of parameters, what should those parameters be, and are they dependent on text size? And ultimately, what literary or rhetorical concept is “centrality” a proxy for? The mathematical core of a centrality measurement is a distance matrix, so what do we learn about a text when calculating word proximity (and frequency of proximity, if calculating edge weight)? Do we learn anything that would have any relevance to anyone since the New Critics?
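To make the setup concrete, here is a minimal sketch of the kind of network these questions are about. It assumes the simplest possible construction: lowercase whitespace tokens as nodes, an undirected edge between any two words that appear next to each other, and edge weight as the number of times that adjacency occurs. The function name `build_text_network` and the sample sentence are my own illustrations, not part of any particular tool.

```python
from collections import Counter, defaultdict


def build_text_network(text):
    """Return (neighbors, weights) for a word-adjacency network.

    Each distinct word is a node. An undirected edge joins two words
    whenever they sit next to each other in the token sequence, and
    the edge weight counts how often that adjacency occurs.
    """
    tokens = text.lower().split()
    neighbors = defaultdict(set)
    weights = Counter()
    for left, right in zip(tokens, tokens[1:]):
        if left == right:
            continue  # ignore self-loops such as "very very"
        neighbors[left].add(right)
        neighbors[right].add(left)
        weights[frozenset((left, right))] += 1
    return dict(neighbors), weights


# Hypothetical sample text, chosen only to keep the network small.
sample = "the cat saw the dog and the dog saw the cat"
neighbors, weights = build_text_network(sample)

# Degree is simply the number of distinct neighbors of each word.
degree = {word: len(adjacent) for word, adjacent in neighbors.items()}

for word in sorted(degree, key=degree.get, reverse=True):
    print(word, degree[word])
```

Even in this toy case the function word "the" has the highest degree, which is one reason such words are usually removed before visualization. Every choice above (tokenization, window size of one, undirected edges) is a parameter that could reasonably be set differently.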

It is not my goal (yet) to answer these questions but merely to point out that they need answers. DH researchers using networks need to come to terms with the linear algebra that ultimately generates them. Although a positive correlation should theoretically exist between different centrality measurements, differences do remain, and knowing which measurement to utilize in which case should be a matter of critical debate. For those using text networks, a robust defense of network application in general is needed. What is gained by thinking about text as a word network?

In an ideal case, of course, the language of social network theory transfers remarkably well to the language of rhetoric and semantics. Here is Linton C. Freeman discussing the notion of centrality in its most basic form:

Although it has never been explicitly stated, one general intuitive theme seems to have run through all the earlier thinking about point centrality in social networks: the point at the center of a star or the hub of a wheel, like that shown in Figure 2, is the most central possible position. A person located in the center of a star is universally assumed to be structurally more central than any other person in any other position in any other network of similar size. On the face of it, this intuition seems to be natural enough. The center of a star does appear to be in some sort of special position with respect to the overall structure. The problem is, however, to determine the way or ways in which such a position is structurally unique.

Previous attempts to grapple with this problem have come up with three distinct structural properties that are uniquely possessed by the center of a star. That position has the maximum possible degree; it falls on the geodesics between the largest possible number of other points and, since it is located at the minimum distance from all other points, it is maximally close to them. Since these are all structural properties of the center of a star, they compete as the defining property of centrality. All measures have been based more or less directly on one or another of them . . .
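Freeman's three properties can be checked on a small star. The sketch below is my own illustration, not Freeman's notation: it computes degree, the number of pairs whose geodesic passes through a point, and the summed distance to all other points (lower means closer). The pair-counting shortcut assumes a graph in which every pair of points is joined by exactly one geodesic, which holds for a star.

```python
from collections import deque
from itertools import combinations


def distances_from(graph, source):
    """Breadth-first search: shortest-path length from source to each node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nxt in graph[node]:
            if nxt not in dist:
                dist[nxt] = dist[node] + 1
                queue.append(nxt)
    return dist


def tree_centralities(graph):
    """Return {node: (degree, betweenness, farness)}.

    Assumes a connected graph with a single geodesic per pair (a tree),
    so betweenness is a plain count of pairs routed through the node.
    """
    dist = {node: distances_from(graph, node) for node in graph}
    report = {}
    for v in graph:
        degree = len(graph[v])
        between = sum(
            1
            for s, t in combinations(graph, 2)
            if v not in (s, t) and dist[s][v] + dist[v][t] == dist[s][t]
        )
        farness = sum(dist[v].values())  # smaller total distance = closer
        report[v] = (degree, between, farness)
    return report


# A five-point star: one hub joined to four leaves (labels are arbitrary).
leaves = ["a", "b", "c", "d"]
star = {"hub": leaves, **{leaf: ["hub"] for leaf in leaves}}

report = tree_centralities(star)
print(report["hub"])  # (4, 6, 4)
print(report["a"])    # (1, 0, 7)
```

The hub has the maximum degree, sits on the geodesic of all six leaf pairs, and has the smallest total distance to the other points, so all three measures agree here. The interesting cases are the ones where they do not.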

Addressing the notions of degree and betweenness centrality, Freeman says the following:

With respect to communication, a point with relatively high degree is somehow “in the thick of things”. We can speculate, therefore, that writers who have defined point centrality in terms of degree are responding to the visibility or the potential for activity in communication of such points.

As the process of communication goes on in a social network, a person who is in a position that permits direct contact with many others should begin to see himself and be seen by those others as a major channel of information. In some sense he is a focal point of communication, at least with respect to the others with whom he is in contact, and he is likely to develop a sense of being in the mainstream of information flow in the network.

At the opposite extreme is a point of low degree. The occupant of such a position is likely to come to see himself and to be seen by others as peripheral. His position isolates him from direct involvement with most of the others in the network and cuts him off from active participation in the ongoing communication process.

The “potential” for a node’s “activity in communication” . . . A “position that permits direct contact” between nodes . . . A “major channel of information” or “focal point of communication” that is “in the mainstream of information flow.” If the nodes we are talking about are words in a text, then it is straightforward (I think) to re-orient our mental model and think in terms of semantic construction rather than interpersonal communication. In other posts, I have attempted to adapt degree and betweenness centrality to a discussion of language by writing that, in a textual network, a word with high degree centrality is essentially a productive creator of bigrams but not a pathway of meaning. A word with high betweenness centrality, on the other hand, is a pathway of meaning: it is a word whose significations potentially slip as it is used first in this and next in that context in a text.

Degree and betweenness centrality—in this ideal formation—are therefore equally interesting measurements of centrality in a text network. Each points you toward interesting aspects of a text’s word usage.

However, most text networks are much messier than the preceding description would lead you to believe. Freeman, again, on the reality of calculating something as seemingly basic as betweenness centrality:

Determining betweenness is simple and straightforward when only one geodesic connects each pair of points, as in the example above. There, the central point can more or less completely control communication between pairs of others. But when there are several geodesics connecting a pair of points, the situation becomes more complicated. A point that falls on some but not all of the geodesics connecting a pair of others has a more limited potential for control.

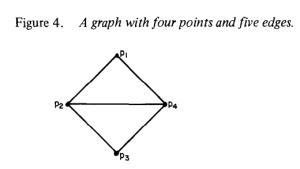

In the graph of Figure 4, there are two geodesics linking p1 with p3, one via p2 and one via p4. Thus, neither p2 nor p4 is strictly between p1 and p3 and neither can control their communication. Both, however, have some potential for control.

Calculating betweenness centrality in this (still simple) case requires recourse to probabilities. A probabilistic centrality measure is not necessarily less valuable; however, the concept should give you an idea of the complexities involved in something as ostensibly straightforward as determining which nodes in a network are most “central.” Put into the context of a text network, a lot of intellectual muscle would need to be exerted to convert such a probability measurement into the language of rhetoric and literature (then again, as I write that . . .).
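Here is a rough sketch of what that probabilistic calculation looks like. It assumes that Figure 4 is a four-point cycle (p1 to p2 to p3 to p4 and back to p1), which is my reading of the quoted description rather than something the passage states outright. Each point is credited, for every pair of other points, with the fraction of that pair's geodesics that pass through it. The function names are my own.

```python
from collections import deque
from itertools import combinations


def bfs_counts(graph, source):
    """Return (distances, geodesic counts) from source to each reachable node."""
    dist = {source: 0}
    paths = {source: 1}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nxt in graph[node]:
            if nxt not in dist:
                dist[nxt] = dist[node] + 1
                paths[nxt] = 0
                queue.append(nxt)
            if dist[nxt] == dist[node] + 1:
                # every geodesic reaching node extends to nxt
                paths[nxt] += paths[node]
    return dist, paths


def betweenness(graph):
    """Sum, over pairs (s, t), the share of s-t geodesics through each node."""
    info = {node: bfs_counts(graph, node) for node in graph}
    score = {node: 0.0 for node in graph}
    for s, t in combinations(graph, 2):
        dist_s, paths_s = info[s]
        if t not in dist_s:
            continue  # s and t are not connected
        for v in graph:
            if v in (s, t):
                continue
            dist_v, paths_v = info[v]
            on_geodesic = (
                v in dist_s
                and t in dist_v
                and dist_s[v] + dist_v[t] == dist_s[t]
            )
            if on_geodesic:
                score[v] += paths_s[v] * paths_v[t] / paths_s[t]
    return score


# Assumed shape of Figure 4: a cycle of four points.
figure_4 = {
    "p1": ["p2", "p4"],
    "p2": ["p1", "p3"],
    "p3": ["p2", "p4"],
    "p4": ["p1", "p3"],
}

scores = betweenness(figure_4)
print(scores)  # each point scores 0.5
```

Under that assumption p2 and p4 each carry one of the two geodesics between p1 and p3, so each gets half the credit for that pair. Translating "half the credit" into a claim about a word's role in a text is exactly the interpretive work that remains to be done.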

As I said, there is reading to be done, mathematical concepts to comprehend, and debates to be had. And ultimately, what we are after perhaps isn’t centrality measurements at all but metrics for node (word) influence. For example, if we assume (as I think we can) that betweenness centrality is a better metric of node influence than degree centrality, then the .gif above clearly demonstrates that degree centrality may be a relatively worthless metric—it gives you a skewed sense of which words exert the most control over a text. What’s more, node influence is a concept sensitive to scale. Though centrality measurements may inform us about influential nodes across a whole network, they may underestimate the local or temporal influence of less central nodes. Centrality likely correlates with node influence but I doubt it is determinative in all cases. Accessing text (from both a writer’s and a reader’s perspective) is ultimately a word-by-word or phrase-by-phrase phenomenon, so a robust text network analysis needs to consider local influence. A meeting of network analysis and reader response theory may be in order. Perhaps we are even wrong to expunge functional words from network analysis. As Franco Moretti has demonstrated, analysis of words as seemingly disposable as ‘of’ and ‘the’ can lead to surprising conclusions. We leave these words out of text networks simply because they create messy, spaghetti-monster visualizations. The underlying math, however, will likely be more informative, once we learn how to read it.