Chomsky’s insight is that language possesses structure independent of meaning. Take the examples below:

(1a) There seems to be a girl in the garden

(1b) ??There seems to be Kate in the garden

(1c) ??There seems to be the boy in the garden

(1d) *There seems to be him in the garden

The only difference between these sentences is the noun in the garden—a girl, Kate, the boy, and him. So why does (1a) sound perfectly fine while the others sound off? Why does (1d) sound thoroughly ungrammatical? There must be structural elements involved here that are not visible in the words themselves.

Another, famous example:

(2a) Colorless green ideas sleep furiously

(2b) *Colorless ideas green furiously sleep

(2c) *Colorless green ideas sleeps furiously

Each sentence is meaningless. Yet most English speakers will agree that (2a) is fine while (2b) is word salad, and that in (2c), there’s something wrong with the verb. Again, the only reason why a meaningless sentence can still sound wrong or right is that the structure of language is at least partially independent of its meaning. From this hypothesis follows the concept of universal grammar—all human groups exhibit language, and if languages exhibit structure independent of meaning, then at a deep level, all human languages, beneath their superficial diversity, might operate upon the same structures. The goal of “Chomskyan” or “formalist” linguistic analysis is to describe the structure of this universal grammar (UG).

An adequate structural model of a language (and, eventually, of all languages) will consist of rules that can generate the grammatical sentences in the language while at the same time barring ungrammatical sentences from being generated. For the last several decades, the work of linguistics in North America and much of Europe has centered around discovering and describing these generative rules. The problem is that when one scholar has got a rule just right (it correctly predicts which sentences will be grammatical and which ones will be filtered out as ungrammatical), some other scholar pops up with new data showing a grammatical or ungrammatical sentence that shouldn’t exist according to the rule. And so the rule gets re-worked, made more complex, or abandoned in favor of some other rule . . . which awaits its destruction at the hands of some bizarre sentence that should or should not be grammatical.
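To make "rules that generate the good sentences and bar the bad ones" concrete, here is a minimal sketch of a toy rule set that covers only the sentences in (2). Everything in it is my own illustration, not anyone's actual proposal: the lexicon, the sentence shape (adjectives, then a noun, then a verb, then an optional adverb), and the function name `is_grammatical` are all assumptions. Note that the rules never look at what the words mean.

```python
# Toy lexicon (an assumption for illustration): word -> (category, number).
# Number is None where agreement does not matter.
LEXICON = {
    "colorless": ("Adj", None),
    "green": ("Adj", None),
    "ideas": ("N", "pl"),
    "idea": ("N", "sg"),
    "sleep": ("V", "pl"),
    "sleeps": ("V", "sg"),
    "furiously": ("Adv", None),
}


def is_grammatical(sentence):
    """Accept only the shape Adj* N V (Adv), with subject-verb agreement."""
    words = sentence.lower().split()
    if any(word not in LEXICON for word in words):
        return False
    tags = [LEXICON[word] for word in words]

    i = 0
    # NP -> Adj* N
    while i < len(tags) and tags[i][0] == "Adj":
        i += 1
    if i >= len(tags) or tags[i][0] != "N":
        return False
    noun_number = tags[i][1]
    i += 1

    # VP -> V (Adv), where V must agree in number with the subject noun
    if i >= len(tags) or tags[i][0] != "V":
        return False
    if tags[i][1] != noun_number:
        return False
    i += 1
    if i < len(tags) and tags[i][0] == "Adv":
        i += 1

    # Any leftover words mean the sentence does not fit the rules.
    return i == len(tags)


print(is_grammatical("Colorless green ideas sleep furiously"))   # (2a) True
print(is_grammatical("Colorless ideas green furiously sleep"))   # (2b) False
print(is_grammatical("Colorless green ideas sleeps furiously"))  # (2c) False
```

A rule set this small gets (2a) through (2c) right and almost nothing else, which is exactly the predicament: every new sentence type demands another rule or another exception.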

It’s obvious that languages have structure. What’s not so obvious is that linguistic structure can be described with a closed system of rules. In the humble opinion of this blogger, trying to model UG is like trying to herd cats. Maybe you can herd most of them, but there’s always a few that just hiss and run away, and their existence seems to undermine the premise of the whole endeavor.

Take reflexive pronouns, for example. If any linguistic element can be described with robustly predictive rules, it should be reflexives. By definition, reflexives are structural: they must refer to (i.e., be co-indexed with) some other noun phrase (NP) in a sentence; otherwise, they sound ungrammatical, as in *Himself went to the store.

It has long been noted that reflexive pronouns in English and many other languages appear in complementary distribution with personal pronouns, which don’t need to co-refer with another noun phrase in a sentence:

(3a) Michael loves himself

(3b) Michael loves him

In (3a), himself can only refer to Michael. In (3b), him cannot refer to Michael; it must refer to some NP other than Michael, an NP which needn’t exist in the same sentence. If you want him to refer to Michael, you don’t use him, you use reflexive himself.

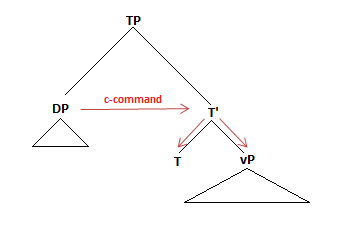

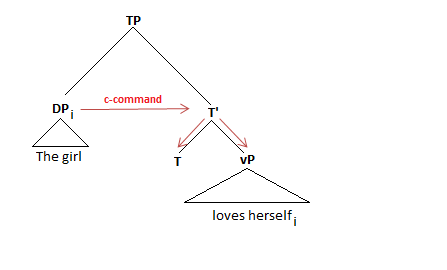

This distribution of reflexives and personal pronouns is the basis of Chomsky’s Binding Theory, specifically Binding Principles A and B, which state, respectively, that reflexives must be c-commanded by their co-indexed NP within some local domain and that pronouns cannot be c-commanded by their co-indexed NP within some local domain. Defining “domain” is tricky. Once upon a time, it appeared that the domain was the clause:

(4) Michael said that he loves Mary

In (4), the pronoun he is indeed c-commanded by its co-indexed NP, Michael, but the sentence is still grammatical. Apparently, Binding Principle B only applies intra-clausally. The “domain” for the binding principles must therefore be the clause.

Binding Principle A: A reflexive pronoun must be c-commanded by its co-indexed NP within the clause that immediately contains both the reflexive and its antecedent.

Binding Principle B: A pronoun must not be c-commanded by a co-indexed NP within the same clause.

An NP that is both c-commanded by and co-indexed with another NP is said to be “bound” by the second NP, its antecedent. Binding Principles A and B can be glossed in simpler terms by saying that a reflexive pronoun must be bound within its clause, and a personal pronoun must not be bound (or “must be free”) within its clause. As formulated, these rules correctly predict the grammaticality of many, many sentences cross-linguistically.
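The clause-based versions of Principles A and B are mechanical enough to write down as a short program. What follows is a rough sketch under my own assumptions, not a standard implementation: trees are hand-built, clauses are labelled "S", the complementizer that is left out of the embedded clause, and the names `Node`, `c_commands`, `bound_in_clause`, and `obeys_principles` are invented for illustration. C-command is taken in its common textbook form: A c-commands B if neither dominates the other and the first branching node above A also dominates B.

```python
class Node:
    """A tree node. NP leaves carry a referential index and a kind
    ("name", "pronoun", or "reflexive"); other nodes leave them as None."""

    def __init__(self, label, children=(), index=None, kind=None):
        self.label, self.index, self.kind = label, index, kind
        self.children = list(children)
        self.parent = None
        for child in self.children:
            child.parent = self

    def dominates(self, other):
        node = other.parent
        while node is not None:
            if node is self:
                return True
            node = node.parent
        return False

    def walk(self):
        yield self
        for child in self.children:
            yield from child.walk()


def c_commands(a, b):
    if a is b or a.dominates(b) or b.dominates(a):
        return False
    ancestor = a.parent
    while ancestor is not None and len(ancestor.children) < 2:
        ancestor = ancestor.parent  # skip non-branching nodes
    return ancestor is not None and ancestor.dominates(b)


def clause_of(node):
    """Smallest clause (node labelled "S") containing the given node."""
    ancestor = node.parent
    while ancestor is not None and ancestor.label != "S":
        ancestor = ancestor.parent
    return ancestor


def bound_in_clause(root, target):
    """True if a co-indexed NP inside target's own clause c-commands it."""
    clause = clause_of(target)
    return any(
        n.label == "NP" and n is not target and n.index == target.index
        and clause is not None and clause.dominates(n) and c_commands(n, target)
        for n in root.walk()
    )


def obeys_principles(root):
    for n in root.walk():
        if n.kind == "reflexive" and not bound_in_clause(root, n):
            return False  # Principle A violated
        if n.kind == "pronoun" and bound_in_clause(root, n):
            return False  # Principle B violated
    return True


def transitive(subj_index, obj_index, obj_kind):
    """[S [NP Michael] [VP [V loves] [NP object]]]"""
    obj = Node("NP", index=obj_index, kind=obj_kind)
    return Node("S", [Node("NP", index=subj_index, kind="name"),
                      Node("VP", [Node("V"), obj])])


ex3a = transitive(1, 1, "reflexive")     # Michael_1 loves himself_1
ex3b_same = transitive(1, 1, "pronoun")  # Michael_1 loves him_1
ex3b_other = transitive(1, 2, "pronoun") # Michael_1 loves him_2

# (4) Michael_1 said [S he_1 loves Mary_2]
embedded = Node("S", [Node("NP", index=1, kind="pronoun"),
                      Node("VP", [Node("V"), Node("NP", index=2, kind="name")])])
ex4 = Node("S", [Node("NP", index=1, kind="name"),
                 Node("VP", [Node("V"), embedded])])

print(obeys_principles(ex3a))        # True
print(obeys_principles(ex3b_same))   # False
print(obeys_principles(ex3b_other))  # True
print(obeys_principles(ex4))         # True
```

The sketch agrees with the judgments for (3a), (3b), and (4): the reflexive needs its clause-mate antecedent, the pronoun cannot have one, and a pronoun in an embedded clause is free to pick up the subject of the higher clause.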

But not all of them:

(5) Michael loves his snake

The pronoun his is bound by Michael within the same clause. That’s a violation of Principle B. (5) should not be grammatical. But it’s grammatical. Something’s wrong with Principle B. And what about the example in (6):

(6) Mary thinks that the picture of herself looks beautiful

The reflexive herself is in a separate clause from its binding NP, Mary. That’s a violation of Principle A. (6) should not be grammatical. But it is. Something’s wrong with Principle A, too.

Chomsky and others tried to tighten up the binding rules to account for these sentences by changing the definition of “domain.” I won’t go into all the details, but at the moment, standard linguistics textbooks describe the binding rules in the following way (these definitions come from Carnie):

Binding Principle A: One copy of a reflexive in a chain must be bound within the smallest CP or DP containing it and a potential antecedent.

Binding Principle B: A pronoun must be free (not bound) within the smallest CP or DP containing it but not containing a potential antecedent. If no such category is found, the pronoun must be free within the root CP.

Clearly, the only way to salvage the entire premise of the binding principles is to make them quite a bit more complicated. That’s not necessarily a mark against them. No one said linguistic structure would be simple or elegant.

However, these new and improved binding rules continue to rely on the notion that reflexives and pronouns will be bound or not bound within their domains. They also continue to predict that reflexives and pronouns will be in complementary distribution.

Damn:

(7) Grand ideas about himself occupy John all day

(8a) John boasted that the Queen had invited Lucie and himself for tea

(8b) John boasted that the Queen had invited Lucie and him for tea

(8a) and (8b) demonstrate that pronouns and reflexives, in this case, are not in complementary distribution. (7) provides an example of a reflexive that is not bound by its co-indexed NP—himself occurs before John. It looks like even our new and improved (and more complex) binding rules fail to predict which sentences will or will not be grammatical. These examples could easily be multiplied. And we haven’t even left English!

Of course, linguists continue to re-formulate binding rules that take the above examples into consideration. But in order to herd these cats, things get very complicated very quickly, and many of the papers formulating new binding rules (e.g., Reinhart and Reuland 1993) contain a lot of sentences that begin with “Suppose that . . .” The suppositions may indeed be correct, and, as I said, there was never a guarantee that the rules of UG would be simple. However, for the past 40 years, North American linguistics has been a constant complication of older rules with newer rules as more data (especially cross-linguistic data) comes to the field’s attention. This process of formulation and re-formulation in light of new data, which I have simplistically illustrated here with Binding Principles, is exactly what linguists do. This process may indeed be expanding our knowledge about the structures of languages and UG. I think it has provided a lot of insight into linguistic structures. But it seems like there can never be closure. There will always be another piece of data to demonstrate that a rule is incomplete or simply incorrect. And unfortunately, the apparent impossibility of the amassed rules ever reaching closure seems to undermine the entire process. One can’t help agreeing, if only momentarily, with John McWhorter’s warning that the search for the structures of Universal Grammar might look as silly to future scholars as the search for phlogiston looks to us today.

(Or it might not. I don’t know. In the end, the argument I made in the paragraph above is similar to the argument against trying to pin down polygenic traits in humans—it’s just too complicated. And that’s never a productive stance to take.)

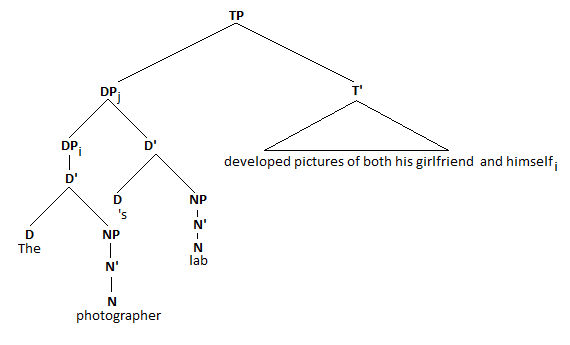

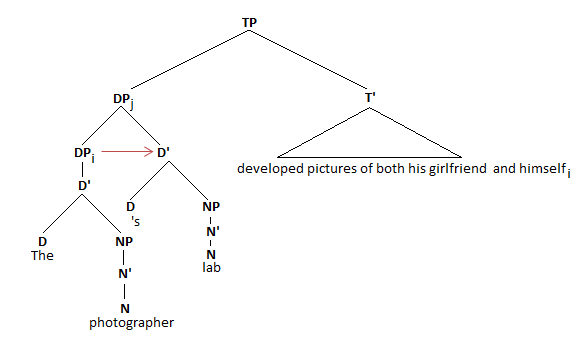

In the sentence The girl loves herself, the anaphor is co-indexed with and c-commanded by its antecedent DP. Thus, the sentence is grammatical. The anaphor cannot refer to anyone but the girl. If you wanted the object to refer to someone or something other than the girl (that is, if you gave it a different index), then you would need to change the anaphor to a pronoun, it or her, to make the sentence grammatical: The girl loves it.
