A materialist theory of literary form will ultimately have to concern itself with the organic processes of reading and composition, but the way to do this is through empirical study of readers and writers, not more interpretation of texts, or armchair ruminations. –Cosma Shalizi in a response to Franco Moretti’s Graphs, Maps, Trees (128)

Janet Emig initiated the writing process movement when she published The Composing Processes of Twelfth Graders, an attempt to study writing in an empirical way (lower case e; no Lockean baggage implied) by closely observing and polling several high school seniors as they wrote essays. Today, the shortcomings of her study are obvious—the sample size was small, and she had no way to track granular textual changes as they were made in real time. However, despite its limitations, Emig’s work introduced an important assumption to the field of writing studies:

Writing is a natural, organic phenomenon that can be studied empirically.

Unfortunately for Emig and the process movement, writing studies was and is situated in an academic context that requires a sharp pedagogical focus, and the empirical study of writing has little to no educational value. Studying writers can tell us how people write, but it doesn’t necessarily tell us how to teach writing, academic or creative or otherwise.

Intimately tied to the pedagogical critique of the process movement was the political critique. In early studies of writing processes, certain contextual elements (read: race, gender, class) were ignored. Emig, for example, did not deeply address the racial differences of her subjects. Critics claimed that the study of writing processes would not pay enough attention to relevant cultural factors that affect how, where, and why people write. This critique was weak, however, because all empirical pursuits must by design bracket out certain contextual elements in their early stages. As a pursuit progresses and gathers knowledge, the causes and effects (if any) of various contextual factors can be coded and controlled for. Race, class, and gender are such factors, and important ones at that, but we needn’t stop there (cross-linguistic differences would be first and foremost on my mind). Dozens of material factors must be taken into consideration when studying writing. Had the process movement not been abandoned, researchers would have gotten around to controlling for all of them.

Then there was the philosophical critique. The goal of studying writing is to build evidence-based theories about this unique human practice from a variety of angles (stylistic, material, cognitive, neuronal, linguistic) and eventually to see how these levels interact. (E.g., What areas of the brain are active at various stages of writing and re-writing? Do small stylistic changes or large organizational changes more often determine a text’s shape? How does textual cohesion emerge? What roles do vision and memory play in the way writers work with their texts on word processors? Do writers across languages and writing systems have completely different processes?) However, like many humanities disciplines, writing studies has been influenced by postmodernism and is thus averse to data-driven, quantitative, empirical methods, and not interested in questions, like the ones above, that require these methods. Gary Olson typifies the philosophical critique when he writes that the process movement attempts to “systematize something [writing] that simply is not susceptible to systematization.”

Of course, no evidence is provided for this claim—but then, none is needed. It isn’t a claim at all. It is an a priori assumption, a statement of faith, designed to obviate any empirical work on the subject.

Ironically, the most valid critique of the process movement in writing studies was one that no one ever made: the technological critique. In the 1980s, when the process movement was jostling for academic legitimacy, researchers simply did not have access to the technology that could enable a more robust inquiry into the material, organic processes of writing.

Today, we do have access to that technology. What’s more, the postmodern zeitgeist has waned in its influence, and the political critique was never quite valid. The time seems right for a return, not necessarily to process theory, but to the assumptions it made about data-driven, quantitative, empirical studies of the way humans compose.

Scholars like Chris Anson, Richard Haswell, and Chuck Bazerman are leading the way. Haswell’s call for “RAD” research (replicable, aggregable, data-supported) is essentially a call for empiricism in writing studies. And Anson’s recent report on the use of eye-tracking devices to study writers at work demonstrates how new technologies will enable and benefit this empirical endeavor. Anson’s line of research could lead to major insights into the ways writers access their ‘textual memory’ in order to manage the many semantic strands that make up any written text. Indeed, this is a perfect example of how technology and RAD methods can test old ideas in writing studies, confirm or complicate those ideas, and fill them in with data-driven details. In 1996, for example, Christina Haas wrote about the way writers manage their texts in Writing Technology: Studies on the Materiality of Literacy:

Clearly, writers interact constantly, closely, and in complex ways with their own texts. Through these interactions, they develop some understanding—some representation—of the text they have created or are creating . . . As the text gets longer and more ideas are introduced and developed, it becomes more difficult to hold an adequate representation in memory of that text, which is out of sight. (117, 121, qtd in Brooke’s Lingua Fracta)

In 2012, enter the eye-tracking software, which can show us where writers look, physically, to develop representations of their texts as they are constructed: What kinds of words or phrases do writers reference most often, as ‘anchors’ for their intellectual wanderings? What are the outside limits of textual vision? Where do writers focus their vision at different stages in the writing process—choosing words, writing sentences, organizing paragraphs, et cetera? Do accomplished writers use their eyes differently than novice writers? Do high-IQ individuals use their eyes less or more while writing; are their visual memories more robust, requiring less visual tracking to make sense of their texts’ cohesion?

Without eye-tracking devices and empirical methodologies, researchers could never hope to answer these and other questions. They would never even think to formulate them.

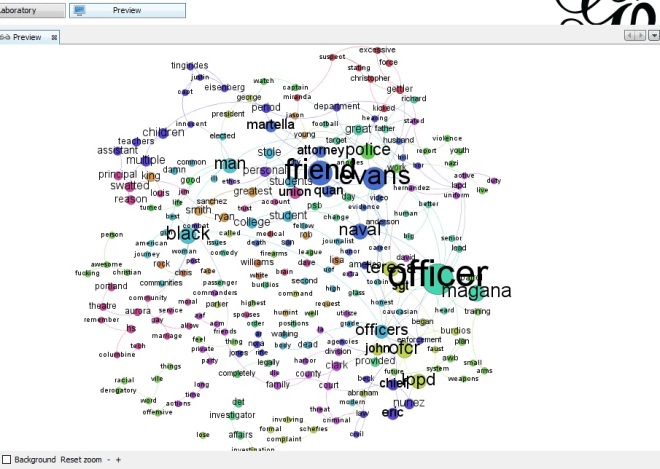

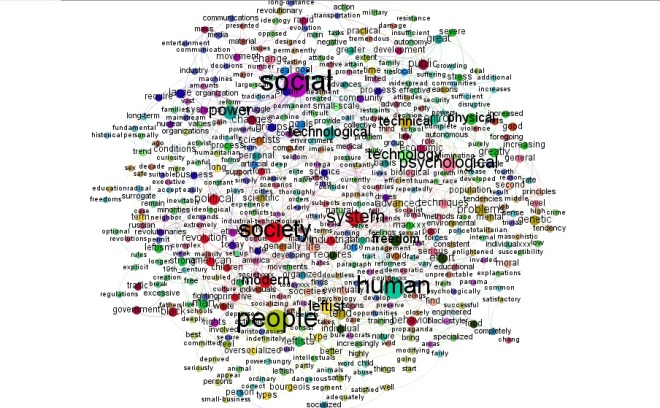

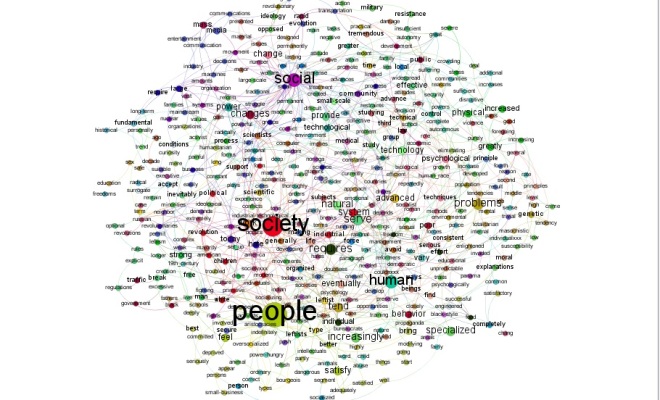

Outside the field of writing studies, researchers are already using technology to capture and study authorial processes. An IBM study used an application called history flow to study contributions to Wikipedia articles and how those contributions endure, change, or are phased out entirely over time. Ben Fry built an amazing visualization of the many changes Darwin made to On the Origin of Species across six editions. And on a smaller scale, Timothy Weninger created a time-lapse video that shows the writing of a research paper in various stages (I’m in the process of figuring out how to do something similar, using track changes in Word).
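To make the idea of tracking revisions concrete, here is a minimal sketch of how two saved drafts of a text might be compared word by word. It is not how history flow, Fry's visualization, or Weninger's video were built; it simply uses Python's standard `difflib` module, and the function name `revision_summary` and the sample drafts are my own illustrative assumptions.

```python
import difflib


def revision_summary(old_draft: str, new_draft: str) -> dict:
    """Count words kept, deleted, and inserted between two drafts.

    Hypothetical helper for illustration: splits on whitespace, so
    punctuation attached to a word is treated as part of that word.
    """
    old_words = old_draft.split()
    new_words = new_draft.split()
    matcher = difflib.SequenceMatcher(a=old_words, b=new_words, autojunk=False)

    summary = {"kept": 0, "deleted": 0, "inserted": 0}
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "equal":
            summary["kept"] += i2 - i1
        else:
            # "delete", "insert", and "replace" all land here; a
            # replacement counts as a deletion plus an insertion.
            summary["deleted"] += i2 - i1
            summary["inserted"] += j2 - j1
    return summary
```

Calling `revision_summary("the cat sat on the mat", "the cat slept on the mat today")` reports five words kept, one deleted, and two inserted. Run over a whole series of saved drafts, counts like these would give a rough picture of when a text grows, shrinks, or stabilizes, though they say nothing about why a writer made a given change.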

The interest in organic authorial processes extends beyond writing studies, so it boggles my mind that writing studies scholars aren’t at the forefront of this research, which, I grant, is in its early stages. Luckily, things are looking up for RAD, empirical research in writing studies. Now that we can start grounding our theories of composition in real data, it’s only a matter of time before we start gaining empirical insight into this strange, relatively recent human behavior that we call ‘writing’.